Evaluate one role through Evalio's governed job-evaluation entry point.

The Job Evaluation Trial is a bounded public proof corridor. You provide organisational context and role evidence, then receive a structured evaluation with a grade view, reference framework views, and a post-output review in the Decision Room. Internal methodology remains protected.

What to expect

- Organisational context — company size, market, industry

- Role evidence — structured intake, text, or document upload

- Bounded output — a governed grade view with supporting review context

- Decision Room review — confidence posture, coherence read, and next-step guidance

This is a bounded trial for one role. For broader analysis — multi-role governance, salary structure alignment, or market benchmarking — a guided discussion scopes the appropriate enterprise engagement.

Trial boundary

The Job Evaluation Trial is intentionally bounded, and that boundary is part of the trust model. The trial runs under the same governed platform discipline used at enterprise depth, with a public-safe output structure. Protected methodology remains confidential.

Evaluation boundary

What you provide, what Evalio returns, what remains protected.

The Job Evaluation Trial is a contract between what you provide, what Evalio returns, and what remains protected. Reading the output well starts with understanding this boundary.

What you provide

Structured role evidence.

- Role evidence and organisational context.

- Confirmed authority, scope, and accountability signals.

- Confirmation where your evidence is thin or ambiguous.

What Evalio returns

A bounded, reviewable output.

- An indicative Evalio Grade view for the role.

- Bounded reference views across market grading frameworks.

- Review context: what the output rests on, where to look before action.

What remains protected

Internal methodology is confidential.

- Scoring, weights, thresholds, and formulas are not exposed.

- Evidence parsing, contradiction detection, and AI mechanics are not exposed.

- Framework conversion mechanics are not exposed.

Reviewability does not require exposing internal scoring. Protected methodology increases integrity — governance boundaries prevent misinterpretation and misuse.

Before starting the Job Evaluation Trial

Use the proof corridor with the boundary visible.

The Job Evaluation Trial is deliberately narrow: it is designed to prove governed evaluation posture for one role, not to replace an enterprise deployment or disclose protected methodology. That boundary is part of the trust model.

What the Job Evaluation Trial does

Takes organisational context and role evidence for one role, then returns a bounded, reviewable output with public-safe review context.

What the Job Evaluation Trial does not do

It does not make employment or pay decisions, does not stand in for a salary survey, and does not replace governed deliberation.

What requires review

Every output should be read against organisational context, role evidence quality, and accountable human judgment before any workforce action.

What remains protected

Internal scoring, calibration mechanics, factor weighting, and mapping logic remain confidential. The trial exposes the output boundary and review posture, not the engine behind them.

Confidentiality boundary

Reviewability does not require exposing Evalio's protected internal method. The public-safe output, evidence posture, and Decision Room review context are visible; protected mechanics remain confidential.

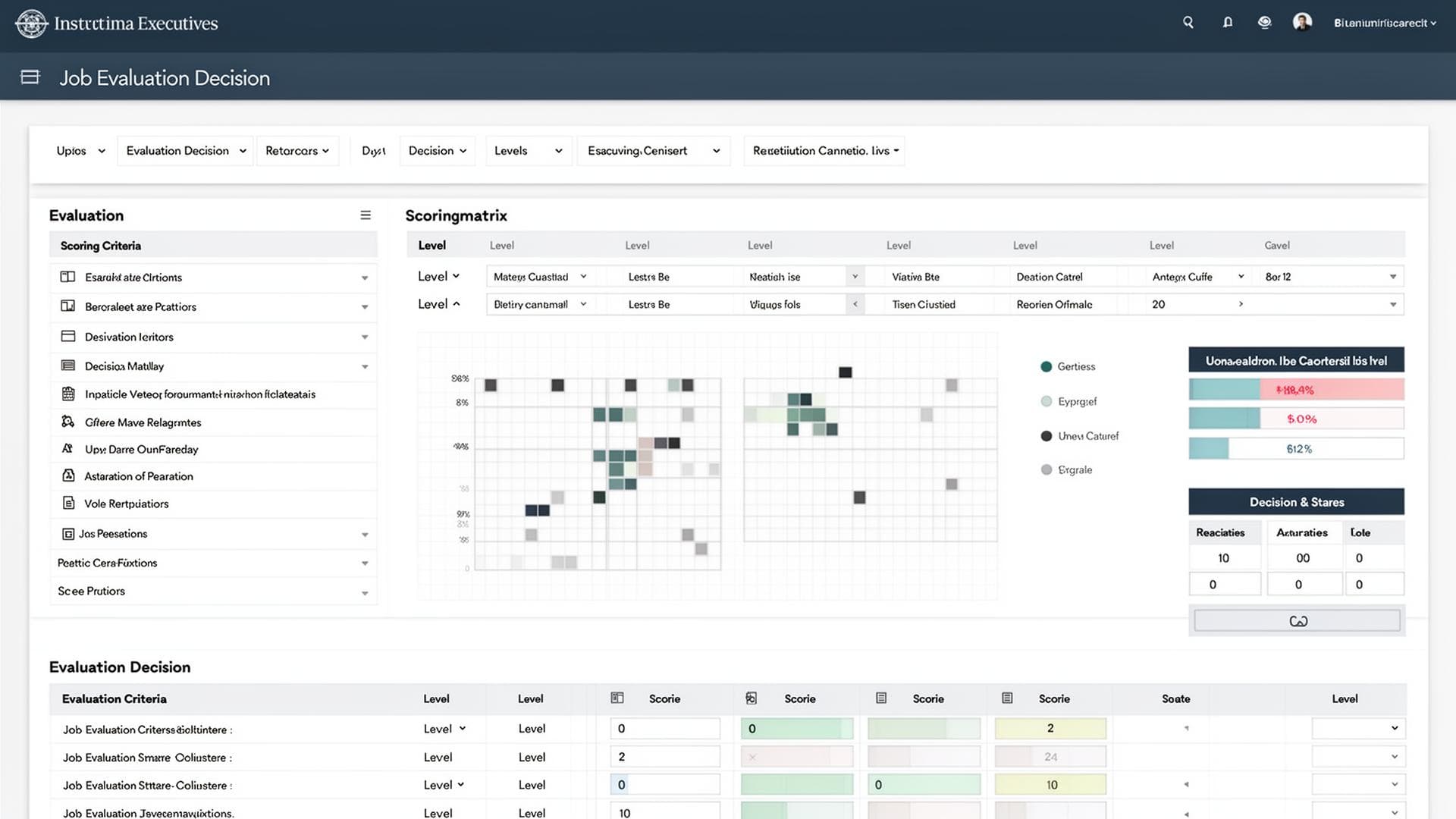

Release-0 bounded proof

A trial screen that shows what the result can responsibly support.

The Release-0 interface remains bounded: one role, structured intake, company-size context, Evalio Grade, framework equivalence, warnings, and Decision Room review. It connects the public proof path to the governed product estate without exposing protected method internals.

Role-evaluation review

Rewards lead and business sponsor examining a role artifact before using it in a workforce decision.

Trial experience preview

Example evaluation result

Governed outcome

G14

Evalio Grade

Example grade only. No raw score, model weights, or protected derivation trail is exposed on the public surface.

Public boundary

- Company size and role evidence are treated as visible interpretation context.

- Pay perspective stays indicative and non-survey; no fabricated market data is implied.

- Review, warning, locked, and gated states are presented as product states within the bounded proof corridor.

Doctrine depth

The product should be read as governed decision infrastructure.

These panels explain the operating logic behind Release-0 without exposing protected scoring values, weights, or proprietary derivation details.

Company-size interpretation

Company size changes the meaning of role weight.

Evalio does not read titles in isolation. The same title can carry different organisational consequence depending on scale, layers, market reach, and decision exposure.

Titles may carry broad scope but limited organisational weight. Evalio treats scale carefully so founder or early-team breadth is not mistaken for large-enterprise grade depth.

Functional ownership and manager titles are interpreted against narrower structural complexity, fewer layers, and more direct execution responsibility.

Role weight begins to reflect formal functions, layered accountability, cross-functional coordination, and more stable decision boundaries.

Scope is interpreted against multi-layer governance, group operating complexity, enterprise risk, and wider organisational consequence.

How to read an Evalio result

The result is a decision artifact, not just an output screen.

1. Evalio Grade is the governed evaluation outcome; raw score and model weights are not public outputs.

2. Framework equivalences are reference points for interpretation, not certification or formal conversion.

3. Evidence and confidence signals determine how much weight the result should carry.

4. Pay perspective is indicative and non-survey; it is not a compensation decision.

5. Decision Room converts the output into a reviewable decision artifact before downstream action.

Evidence quality

Evidence quality controls how much weight the result should carry.

Strong evidence

Clear role purpose, accountabilities, people scope, decision authority, delivery complexity, and context are aligned.

Moderate evidence

Enough information exists for a directional grade, but some interpretation may depend on missing scope or unclear boundaries.

Thin evidence

The result can be useful for early diagnosis, but it should not carry heavy decision weight without strengthening the role evidence.

Contradictory evidence

Signals conflict. Decision Room should be used to identify whether the job description, title, or context needs correction before reliance.
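The four evidence tiers above can be kept as a small reader-side checklist. The sketch below is illustrative only: the names `EvidenceQuality`, `READING_GUIDANCE`, and `review_before_reliance` are hypothetical, the guidance strings paraphrase the tier descriptions, and nothing here reflects how Evalio classifies evidence internally, which remains protected.

```python
from enum import Enum


class EvidenceQuality(Enum):
    """The four evidence tiers named in the text above."""
    STRONG = "strong"
    MODERATE = "moderate"
    THIN = "thin"
    CONTRADICTORY = "contradictory"


# Reading guidance paraphrased from the tier descriptions above.
READING_GUIDANCE = {
    EvidenceQuality.STRONG: "Role evidence and context are aligned.",
    EvidenceQuality.MODERATE: "Directional grade; check missing scope or unclear boundaries.",
    EvidenceQuality.THIN: "Early diagnosis only; strengthen the role evidence first.",
    EvidenceQuality.CONTRADICTORY: "Signals conflict; use Decision Room to find what needs correction.",
}


def review_before_reliance(quality: EvidenceQuality) -> bool:
    """True for tiers the text says should not carry heavy decision weight as-is."""
    return quality in {EvidenceQuality.THIN, EvidenceQuality.CONTRADICTORY}


print(READING_GUIDANCE[EvidenceQuality.THIN])
```

A reviewer could use a check like this to flag results that need stronger or corrected role evidence before anyone relies on them.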

Framework equivalence boundary

External framework references support interpretation; they do not replace governance.

Hay / Korn Ferry, Mercer IPE, WTW GGS, and Aon references are indicative alignment points. They help users understand relative positioning, but they are not formal certifications, proprietary framework conversions, or survey claims.

Pay perspective boundary

Indicative pay perspective is not a market survey or a compensation decision.

The pay perspective is an early discussion frame derived from Evalio Grade and company-size context. It does not represent local market benchmarking, internal equity analysis, budget approval, or final salary decision.

Job Evaluation Platform — Trial Experience

Structured role evaluation in four steps.

Provide organisational context, describe the role, confirm the structured draft, and receive a deterministic evaluation with an indicative grade and Decision Room review.

- 01 Organisation

- 02 Intake method

- 03 Role definition

- 04 Confirmation

- 05 Evaluation

1. Organisational context

The evaluation begins with organisational context because a role cannot be interpreted credibly in isolation. Company size comes first because organisational scale changes the weight, complexity, and reach of what the role is accountable for.

Why context comes first

A role cannot be evaluated credibly in isolation. Company size determines the weight and complexity of what the role is accountable for. Location and industry provide the operating context. These shape the evaluation before any role detail is entered.

Live proof is the strongest signal of seriousness.

Most platforms demonstrate capability through slides, marketing language, or gated previews. Evalio takes a different position: the Job Evaluation Trial is publicly available — producing real structured outputs under a public-safe output boundary that any serious buyer can examine, interpret, and challenge without a sales conversation.

The market for workforce decision infrastructure is saturated with assertions. Defensible job levelling, structured evaluation, and auditable decision records are frequently claimed but rarely demonstrated. The Job Evaluation Trial — including a Decision Room designed to help you review what each output rests on — closes that gap.

A working product with bounded access is more credible than a broad platform that is described.

What the Job Evaluation Trial proves.

Structured job evaluation is not theoretical. It is a working system that takes defined inputs, applies a governed method, and produces a reviewable, public-safe output with review context. Internal methodology is protected by design.

Deterministic posture is demonstrable

Under the same inputs and criteria, the Job Evaluation Trial returns the same public-safe output. No black-box variance, no evaluator-dependent drift.
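A reader who has saved two trial runs can test this repeatability claim from the outside. The sketch below is a minimal illustration under stated assumptions: the run structure (`inputs` and `output` dictionaries) and the field names are hypothetical, not an Evalio export format, and the comparison only looks at the public-safe output a user can see.

```python
import hashlib
import json


def fingerprint(payload: dict) -> str:
    """Stable SHA-256 fingerprint of a JSON-serialisable dict."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def is_repeatable(run_a: dict, run_b: dict) -> bool:
    """True when two runs with identical inputs show identical outputs."""
    if fingerprint(run_a["inputs"]) != fingerprint(run_b["inputs"]):
        raise ValueError("Runs used different inputs; they are not comparable.")
    return fingerprint(run_a["output"]) == fingerprint(run_b["output"])


# Hypothetical saved runs; "G14" is the example grade shown on the page.
first_run = {
    "inputs": {"company_size": "mid-sized", "role_title": "Finance Manager"},
    "output": {"evalio_grade": "G14"},
}
second_run = {
    "inputs": {"role_title": "Finance Manager", "company_size": "mid-sized"},
    "output": {"evalio_grade": "G14"},
}

print(is_repeatable(first_run, second_run))
```

Sorting keys before hashing means the order in which fields were recorded does not affect the comparison; only the values do.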

Structured multi-criteria evaluation holds up

Roles read across structured role-evidence dimensions produce a bounded output that withstands comparison and challenge. Internal logic is not exposed.

Decision records can be institutional-grade

Every evaluation produces a governed record capturing inputs, stated method, bounded output, and review context — with a Decision Room that helps stakeholders review what the output rests on.

Live proof replaces marketing claims

The Job Evaluation Trial is publicly accessible. Any serious buyer can examine outputs, review context, and stated boundaries without a sales conversation.

What the Job Evaluation Trial does.

Each capability is live, governed, and produces a bounded, reviewable output. Not planned features — working functions of the current public trial surface.

Structured job evaluation

Every evaluation follows a governed method under a public-safe output boundary, producing consistent outputs with review context regardless of evaluator. Internal methodology remains protected.

Structured multi-criteria evaluation

Roles are read across structured role-evidence dimensions, producing a bounded, reviewable output without exposing internal mechanics.

Grade view

A governed grade view emerges from the structured evaluation rather than being assigned by judgment, producing a reviewable, explainable output. The internal logic behind it remains protected.

Decision record generation

Every evaluation produces a structured record capturing inputs, stated method, bounded output, and review context — reviewable before decisions take effect.
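The four parts of the record named above (inputs, stated method, bounded output, review context) map naturally onto a simple data structure. The sketch below shows one way a reviewing team might hold its own copy of a result and check that no part is empty before acting on it. `DecisionRecord` and `missing_sections` are hypothetical names, and the field contents are illustrative; this is not Evalio's record schema.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical reader-side record; fields follow the four parts named above."""
    inputs: dict
    stated_method: str
    bounded_output: dict
    review_context: tuple


def missing_sections(record: DecisionRecord) -> list:
    """Return the names of any sections that are empty."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


example = DecisionRecord(
    inputs={"company_size": "mid-sized", "role_title": "Finance Manager"},
    stated_method="Job Evaluation Trial, public-safe output",
    bounded_output={"evalio_grade": "G14"},  # example grade shown on the page
    review_context=(),  # left empty on purpose to show the check
)

print(missing_sections(example))  # ['review_context']
```

Freezing the dataclass keeps the record from being reassigned after capture, which suits something meant to be reviewed before decisions take effect.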

Cross-role comparison

Outputs are comparable across roles under the same public-safe output boundary, enabling a fair, reviewable, and interpretable grade view across the organisation.

What the Job Evaluation Trial does not cover.

The trial provides a bounded proof experience of Evalio's Job Evaluation Platform under the same governed platform discipline used at enterprise depth, with a public-safe output structure. The following are part of the broader platform:

Job architecture design

Role family structures, career tracks, and organisational taxonomy.

Pay alignment

Compensation frameworks connected to evaluation and market positioning.

Multi-regional governance

Governed consistency across regions and structural coherence enforcement.

Workforce decision workflows

End-to-end decision flows spanning architecture, evaluation, and pay.

The limitation is access scope, not methodological seriousness. The Job Evaluation Trial runs under the same governed platform discipline as the full platform, with a public-safe output structure. Protected internal logic remains confidential.

How the Job Evaluation Trial connects to the broader system.

The Job Evaluation Trial is the public entry into the evaluation domain within a larger connected decision environment. The full platform links job architecture, evaluation, and pay alignment into a single system — so every workforce decision traces from input to output under a public-safe output boundary, with interpretive clarity. Internal methodology remains protected.

After evaluation

Continue based on what your output reveals.

Decision Room

Canonical post-output review for stronger artifact readback and confidence assessment.

Extended Review

A deeper secondary corridor after Decision Room review for readiness and governed handoff.

Engage: Talk to Evalio

Guided discussion for interpretive or decision-specific follow-up.

Proof-first buyer journey

From public proof to governed enterprise activation.

The journey connects orientation, the Job Evaluation Trial, Decision Room review, trust review, enterprise scoping, and governed Total Rewards activation in one ordered path.

- Step 01: Orient

Orient through proof

Start with the teaser and demo library so the category, posture, and proof boundary are clear before trial use.

- Step 02: Trial

Start the Job Evaluation Trial

Run one governed job evaluation under a public-safe output boundary, without exposing protected mechanics.

- Step 03: Review

Review the Decision Room

Read the result basis, caveats, reference views, and indicated next step as a review surface, not a scoring trace.

- Step 04: Trust

Check trust and methodology

Understand what is reviewable, what is protected, how outputs should be used, and why the boundaries exist.

- Step 05: Engage

Scope the enterprise corridor

Move from public proof into a governed discussion about workspace activation, roles, countries, and operating scope.

- Step 06: Operate

Activate governed modules

Compensation architecture, salary structures, internal equity, merit, offer, and workforce planning activate only through governed entitlement.